Autonomous Trading Ethics and Explainable AI in Algorithmic Finance Dissertation Topics | phdassistance.com

Published: 2 May


Introduction

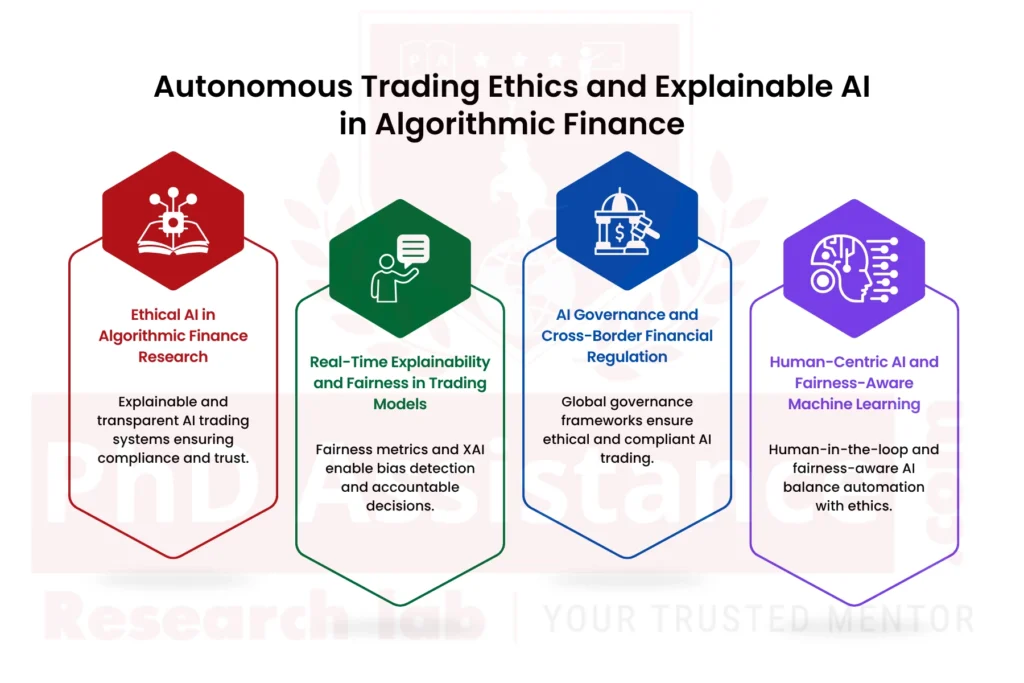

Researchers who study ethical AI often find it difficult to choose a PhD research topic, because the topic must satisfy both academic requirements and industry expectations. A doctoral topic should reflect actual market conditions, current regulatory frameworks and ongoing developments in artificial intelligence. PhD Assistance provides professional guidance in choosing research topics that offer both academic value and real-world applicability. The topics below investigate ethical AI in trading systems across several research areas, including explainable AI in finance, fairness assessment in algorithmic trading, bias detection, human-in-the-loop monitoring and the management of autonomous trading systems. The team ensures that each topic reflects current research trends and meets university and publication standards. Our topic selection support draws on expert domain knowledge to help scholars develop an innovative research direction.

Proposed PhD Topic 1: Developing Standardised Explainable AI Frameworks for Ethical AI in Trading and Transparent Algorithmic Finance

Background Context:

The growing use of AI-driven algorithmic trading systems has created challenges for both their operation and their compliance with regulatory standards. High-frequency trading models must deliver predictions almost instantly, yet the results they produce are often difficult for customers and other stakeholders to understand. Research indicates that black-box models based on deep learning and ensemble techniques force a trade-off between operational efficiency and explainability in real-time financial systems. Existing explainability tools such as SHAP and LIME require additional computation, which raises ethical concerns in autonomous trading, where decisions demand an instant response and there may be no time left to explain them. Organisations therefore need hybrid XAI frameworks that combine enough explanatory capacity to meet legal requirements with rapid operational performance.
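The speed/explainability trade-off can be made concrete with a minimal sketch. Assuming a hypothetical linear trading signal whose features are roughly independent, exact SHAP-style attributions reduce to the closed form w_i * (x_i - E[x_i]), which costs one multiplication per feature. An explanation can therefore be produced alongside every decision without the sampling overhead of model-agnostic SHAP or LIME. The class `LinearSignalModel`, the function `decide_with_explanation`, the feature names, weights and threshold are all illustrative assumptions, not a framework proposed in the source.

```python
import math
import time
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass
class LinearSignalModel:
    """Hypothetical linear trading signal: score = bias + sum_i w_i * x_i."""
    weights: Dict[str, float]
    bias: float
    feature_means: Dict[str, float]  # background expectations E[x_i], e.g. estimated from training data

    def score(self, x: Dict[str, float]) -> float:
        return self.bias + sum(w * x[name] for name, w in self.weights.items())

    def expected_score(self) -> float:
        # For a linear model, E[f(x)] = f(E[x]).
        return self.score(self.feature_means)

    def explain(self, x: Dict[str, float]) -> Dict[str, float]:
        # Exact SHAP values for a linear model with independent features:
        # phi_i = w_i * (x_i - E[x_i]). One multiply per feature, no sampling.
        return {name: w * (x[name] - self.feature_means[name]) for name, w in self.weights.items()}


def decide_with_explanation(
    model: LinearSignalModel, x: Dict[str, float], threshold: float = 0.0
) -> Tuple[str, Dict[str, float], float]:
    """Hypothetical decision step: return the action, its attributions and elapsed microseconds."""
    start = time.perf_counter()
    s = model.score(x)
    attributions = model.explain(x)
    elapsed_us = (time.perf_counter() - start) * 1e6
    action = "BUY" if s > threshold else "HOLD"
    return action, attributions, elapsed_us


def check_local_accuracy(
    model: LinearSignalModel, x: Dict[str, float], attributions: Dict[str, float], tol: float = 1e-9
) -> bool:
    # Attributions should sum to f(x) - E[f(x)] (the SHAP "local accuracy" property).
    return math.isclose(sum(attributions.values()), model.score(x) - model.expected_score(), abs_tol=tol)


if __name__ == "__main__":
    model = LinearSignalModel(
        weights={"order_imbalance": 2.0, "momentum": 0.5, "spread": -1.0},
        bias=0.1,
        feature_means={"order_imbalance": 0.0, "momentum": 0.2, "spread": 0.5},
    )
    tick = {"order_imbalance": 0.3, "momentum": 0.6, "spread": 0.4}
    action, attrs, elapsed_us = decide_with_explanation(model, tick)
    print(action, attrs, f"{elapsed_us:.1f} us", check_local_accuracy(model, tick, attrs))
```

Because the explanation comes from the same arithmetic as the score, it adds very little latency. The catch is that this closed form holds only for linear models, so a hybrid framework would still need a strategy for the deep learning and ensemble models described above.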

PhD-Level Verification:

Existing research largely investigates post hoc explanation methods in relatively static financial applications, such as credit scoring and fraud detection. Few studies address explainability in real-time trading systems, which must make rapid decisions under tight time constraints. The research gap is the absence of XAI frameworks that scale with minimal latency, a problem that must be solved to achieve both regulatory compliance and optimised model performance.

Research Questions:

PhD-Level Contributions:

Suggested Readings:

Ayankoya, M. B. (2025). Explainable AI in Data-Driven Finance: Balancing Algorithmic Transparency with Operational Optimisation Demands.

Proposed PhD Topic 2: Building Scalable Explainable AI Systems for High-Frequency Trading and Rapid Financial Decision-Making

Background Context:

AI technology improves prediction quality and operational performance in high-frequency trading and real-time financial decision-making. However, many advanced AI systems function as black boxes, which prevents users from understanding their internal workings. Traders must make fast decisions under tight time constraints, and adding explainability often degrades system performance. Current XAI methods such as SHAP and LIME add processing overhead that makes them unsuitable for live trading. The market needs XAI systems that provide near-instant insight into model behaviour, so that traders can maintain both their efficiency and their prediction accuracy.
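To illustrate where the extra processing comes from, here is a minimal sketch of exact Shapley values computed by brute force. Every subset of features is evaluated once, with "absent" features replaced by a single baseline input (an assumption; SHAP supports other ways of defining absence). The number of distinct model calls is 2^n for n features, which is why practical tools such as SHAP and LIME fall back on sampling, local surrogate models or model-specific shortcuts, and why even those approximations can add noticeable latency to a live trading loop. The function `exact_shapley`, the toy signal and its inputs are hypothetical.

```python
from itertools import combinations
from math import factorial
from typing import Callable, Dict, FrozenSet, List, Sequence, Tuple


def exact_shapley(
    f: Callable[[List[float]], float],
    x: Sequence[float],
    baseline: Sequence[float],
) -> Tuple[List[float], int]:
    """Exact Shapley values of f at x, replacing 'absent' features with baseline values.

    Returns the attributions and the number of distinct model evaluations used.
    """
    n = len(x)
    cache: Dict[FrozenSet[int], float] = {}

    def value(subset: FrozenSet[int]) -> float:
        # Model output when only the features in `subset` take their values from x.
        if subset not in cache:
            z = [x[i] if i in subset else baseline[i] for i in range(n)]
            cache[subset] = f(z)
        return cache[subset]

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):  # coalition sizes 0..n-1 drawn from the other features
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi, len(cache)  # every one of the 2**n subsets is evaluated exactly once


if __name__ == "__main__":
    # Hypothetical linear signal; for such a model phi_i = w_i * (x_i - baseline_i).
    def signal(z: List[float]) -> float:
        return 0.1 + 2.0 * z[0] + 0.5 * z[1] - 1.0 * z[2]

    phi, calls = exact_shapley(signal, [0.3, 0.6, 0.4], [0.0, 0.2, 0.5])
    print([round(p, 6) for p in phi], calls)
```

For the three-feature toy signal the enumeration needs 8 model calls; with 20 features it would need 1,048,576 for a single decision.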

PhD-Level Verification:

Research on explainable artificial intelligence in finance has concentrated on credit scoring and fraud detection, while largely ignoring live trading systems. Live financial markets operate at high speed, and existing research offers no operational explainability method that meets their performance requirements. Existing studies also fail to strike a proper balance between interpretability and predictive effectiveness, which is a major research gap.

Research Questions:

PhD-Level Contributions:

Suggested Readings:

Khan, F. S., et al. (2025). Model-Agnostic Explainable Artificial Intelligence Methods in Finance: A Systematic Review, Limitations, and Future Directions.

Proposed Dissertation Topic 3: Advancing Interdisciplinary Governance Models for Ethical AI in Trading and Cross-Border Financial Regulation

Background Context:

AI trading systems now execute trades across global stock, currency and digital asset markets, while individual countries continue to enforce their own trading regulations. This fragmented oversight creates control gaps in accountability, fairness assessment, transparency evaluation and the fulfilment of legal obligations. Lee (2020), writing in the European Business Organization Law Review, discusses the growing need for stronger AI regulation in financial services. Ethical AI in trading therefore needs interdisciplinary governance systems that connect regulators, developers, policymakers and financial institutions.

PhD-Level Verification:

Existing literature often examines national AI regulations or single-market governance policies in isolation. There is little research on collaborative frameworks that involve financial institutions, regulators and AI developers across different countries, and limited evidence on how cross-border governance can reduce the ethical and compliance risks that arise from algorithmic trading.

Research Questions:

PhD-Level Contributions:

Suggested Readings:

Fantozzi, I. C., Santolamazza, A., Loy, G., & Schiraldi, M. M. (2025). Digital Twins: Strategic Guide to Utilize Digital Twins to Improve Operational Efficiency in Industry 4.0.

Proposed Dissertation Topic 4: Integrating Fairness-Aware Machine Learning to Reduce Bias in Trading Models and Improve Explainable Machine Learning in Finance

Background Context:

Autonomous trading systems learn from historical market data and trading records, assessing price movements that can reflect structural market errors, hidden trends and market inefficiencies. Models trained on such data can absorb these distortions as bias, which may lead to unfair financial outcomes. Chakrabarti et al. (2025), writing in the Journal of Financial Data Science, emphasise that ethical AI frameworks are vital for trust, transparency and compliance. Existing trading systems lack unified models that combine fair treatment, transparent explanations and accurate prediction.

PhD-Level Verification:

Researchers evaluate models on accuracy, fairness and explainability, but they have not created a unified framework that tests all three together. There is still too little research on explainable machine learning in finance that incorporates fairness mechanisms and operates under real trading conditions with dynamic market changes. Existing models also need more evidence of their performance in real financial market environments.
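As a minimal sketch of what a unified evaluation might report, the code below computes accuracy, demographic parity difference (the largest gap in positive-decision rates between groups) and a simple explanation-concentration proxy side by side for the same set of decisions. The group labels, the top-k concentration proxy, the function names and the example data are hypothetical assumptions for illustration. Demographic parity is only one of several fairness criteria, and a framework for live trading would also need to track these metrics as market conditions shift.

```python
import math
from dataclasses import dataclass
from typing import Dict, Hashable, List, Sequence


@dataclass
class EvaluationReport:
    accuracy: float
    demographic_parity_diff: float    # largest gap in positive-decision rate between groups
    explanation_concentration: float  # mean share of |attribution| carried by the top-k features


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    return sum(int(t == p) for t, p in zip(y_true, y_pred)) / len(y_true)


def demographic_parity_difference(y_pred: Sequence[int], groups: Sequence[Hashable]) -> float:
    # Positive-decision rate P(y_hat = 1 | group) for each group, then max minus min.
    by_group: Dict[Hashable, List[int]] = {}
    for p, g in zip(y_pred, groups):
        by_group.setdefault(g, []).append(p)
    rates = [sum(v) / len(v) for v in by_group.values()]
    return max(rates) - min(rates)


def explanation_concentration(attributions: Sequence[Sequence[float]], k: int = 2) -> float:
    # Hypothetical readability proxy: how much of each explanation is carried by its k largest terms.
    shares = []
    for attr in attributions:
        mags = sorted((abs(a) for a in attr), reverse=True)
        total = math.fsum(mags)
        shares.append(math.fsum(mags[:k]) / total if total > 0 else 1.0)
    return sum(shares) / len(shares)


def evaluate(
    y_true: Sequence[int],
    y_pred: Sequence[int],
    groups: Sequence[Hashable],
    attributions: Sequence[Sequence[float]],
    k: int = 2,
) -> EvaluationReport:
    return EvaluationReport(
        accuracy=accuracy(y_true, y_pred),
        demographic_parity_diff=demographic_parity_difference(y_pred, groups),
        explanation_concentration=explanation_concentration(attributions, k),
    )


if __name__ == "__main__":
    # Hypothetical decisions for two client segments.
    report = evaluate(
        y_true=[1, 0, 1, 1, 0, 1],
        y_pred=[1, 0, 1, 0, 0, 1],
        groups=["retail"] * 3 + ["institutional"] * 3,
        attributions=[[0.6, -0.3, 0.1]] * 6,
    )
    print(report)
```

Reporting the three numbers together, rather than optimising accuracy alone, makes trade-offs visible: a model change that raises accuracy but widens the parity gap would show up immediately.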

Research Questions:

PhD-Level Contributions:

Suggested Readings:

Fantozzi, I. C., Santolamazza, A., Loy, G., & Schiraldi, M. M. (2025). Digital Twins: Strategic Guide to Utilize Digital Twins to Improve Operational Efficiency in Industry 4.0.

Proposed Dissertation Topic 5: Designing Domain-Specific and Regulatory-Compliant Explainable AI Models for Transparent Financial Systems

Background Context:

Financial institutions use artificial intelligence for credit risk assessment, fraud detection and portfolio management. Despite technical progress in AI-driven financial systems, problems with transparency and accountability undermine user trust and make regulatory compliance difficult. Generic XAI models are often unsuitable for financial applications because they are not designed around the specific requirements of the field. Financial institutions must comply with strict regulatory frameworks, yet existing explainability methods do not fully meet these legal and ethical requirements. The financial industry therefore needs domain-specific XAI frameworks that support transparent, fair practices and allow its AI systems to meet compliance standards.

PhD-Level Verification:

Existing research shows that current XAI frameworks do not offer financial institutions tailored solutions that address their operational needs, their regulatory obligations and their ethical standards. The field also lacks standard frameworks that connect explainability requirements with existing financial policies and governance systems. Closing this gap requires interdisciplinary methods that integrate AI technology, financial systems and regulatory frameworks.

Research Questions:

PhD-Level Contributions:

Suggested Readings:

Need assistance finalising your dissertation topic? Selecting a strong, researchable topic can be challenging — but you don’t have to do it alone.

Our research consultants can help refine your ideas, identify literature gaps, and guide you toward a topic that aligns with current academic trends and your programme requirements.

Contact us to begin one-on-one topic development and refinement with PhdAssistance.com Research Lab.
